Having looked through the calibration code, I think it’s great for what it does, but I don’t think it would help much with anti-cogging during actual rotation at significant speed. At lower speeds, where the goal is precise positioning, cogging is mostly taken care of by the sensor itself and the control loop, I think. It depends a lot on what you want to do. For me, I want smooth, continuous torque to keep the noise down. People making smart knobs want something similar, but at much lower speeds. People with higher-power motors may want to reduce vibration to more practical levels, perhaps to cut noise and wear. People making robots are probably concerned with intermediate speeds, I would think, and that seems to me to be the hardest problem.

The information from the current sensor calibration would not help much with that, except as a starting point for subsequent data gathering, since you would then have a more accurate sensor.

My understanding is that cogging is mostly about torque: it biases the rotor position relative to the electrical angle, and it makes the rotor’s acceleration vary with its angular position. There are papers on Google Scholar that discuss some compensation methods, and people on Hackaday doing lower-cost experiments to compensate for cogging, and there is definitely a ton of promise. However, I think the measurements need to be taken while the motor is moving at considerable speed, not during the very slow motion currently used by the angle calibration.
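A rough way to see why the effect depends on angle, speed and inertia is a single-harmonic model. This is an assumption: real cogging torque has several harmonics, and its fundamental period is typically set by the least common multiple of the slot and pole counts. With rotor inertia $J$, motor torque $\tau_m$, viscous friction $b$ and cogging torque $A\sin(N\theta)$:

$$J\,\ddot{\theta} = \tau_m - b\,\dot{\theta} + A\sin(N\theta)$$

If $\tau_m$ balances friction on average, the rotor turns at a nearly constant $\omega_0$ (so $\theta \approx \omega_0 t$), and friction damping is small over one cogging period ($b/J \ll N\omega_0$), then

$$\dot{\omega} \approx \frac{A}{J}\sin(N\omega_0 t) \quad\Rightarrow\quad \omega(t) \approx \omega_0 - \frac{A}{J N \omega_0}\cos(N\omega_0 t)$$

so the peak-to-peak speed ripple is roughly $2A/(J N \omega_0)$. The acceleration ripple $A/J$ does not shrink with speed, but the resulting speed ripple falls as both $\omega_0$ and $J$ grow.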

What the angle calibration code appears to do is command the electrical angle to some position X, and then give the rotor a short time to settle into its equilibrium position. It then assumes that the rotor is in fact at that position, i.e. where the magnetic field alone would put it in the absence of cogging, and builds its lookup table. Then, at run time, it uses the table backwards, taking the angle reading and working back to what the electrical angle would have been. There is also some clever interpolation between the calibration table points, which is definitely good.
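To make the “use the table backwards” step concrete, here is a minimal sketch of the idea as I understand it, not the library’s actual code. Everything here is hypothetical: the `CalibrationTable` class, one pole pair (so electrical and mechanical angles coincide), and linear interpolation of the offset between neighbouring points as a stand-in for whatever interpolation the real code uses.

```python
import bisect
import math

TWO_PI = 2.0 * math.pi


def wrap_pi(x):
    """Wrap an angle difference into [-pi, pi)."""
    return (x + math.pi) % TWO_PI - math.pi


class CalibrationTable:
    """Hypothetical sketch of the calibration lookup (assumes pole pairs = 1).

    For each commanded electrical angle we store the sensor reading the rotor
    settled at. At run time a reading is mapped back to an electrical angle by
    interpolating the stored (commanded - reading) offsets.
    """

    def __init__(self, commanded, measured):
        # Sort by sensor reading so the table can be searched at run time.
        pts = sorted((m % TWO_PI, wrap_pi(c - m)) for c, m in zip(commanded, measured))
        self.sensed = [s for s, _ in pts]
        self.offset = [d for _, d in pts]

    def electrical_angle(self, reading):
        r = reading % TWO_PI
        n = len(self.sensed)
        i = bisect.bisect_right(self.sensed, r)
        # Neighbouring calibration points, wrapping across the 0/2*pi seam.
        if i > 0:
            s0, d0 = self.sensed[i - 1], self.offset[i - 1]
        else:
            s0, d0 = self.sensed[-1] - TWO_PI, self.offset[-1]
        if i < n:
            s1, d1 = self.sensed[i], self.offset[i]
        else:
            s1, d1 = self.sensed[0] + TWO_PI, self.offset[0]
        t = (r - s0) / (s1 - s0)
        # Linear interpolation of the offset along the shorter way round.
        d = d0 + t * wrap_pi(d1 - d0)
        return (r + d) % TWO_PI
```

Interpolating the offset rather than the angle itself avoids a spurious jump where the readings wrap from 2π back to 0.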

However, I think this might actually be worsening the motion of the motor, because during the calibration the rotor does not in fact go to the commanded position. You are essentially baking in the cogging, not undoing it. I suspect that if you took a proper encoder and compared the angles of the “calibrated” magnetic sensor to the encoder’s readings, you would find that the calibrated sensor is better in some ways but worse in others. However, this is just a hypothesis, and there is a good chance I’m totally wrong.
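The hypothesis can be illustrated (not proven) with a toy static model. The assumptions are mine: one pole pair, a field torque of `stiffness * sin(commanded - theta)`, and a single-harmonic cogging torque `cog_amplitude * sin(n_cog * theta)` with made-up values. The sensor is taken to be perfect, so any deviation the table records comes only from cogging.

```python
import math


def settle(commanded, stiffness=0.1, cog_amplitude=0.002, n_cog=12, iterations=100):
    """Rotor angle where field torque balances a hypothetical cogging torque.

    Assumed equilibrium condition (pole pairs = 1):
        stiffness * sin(commanded - theta) + cog_amplitude * sin(n_cog * theta) = 0
    Solved by fixed-point iteration, which converges when
    cog_amplitude * n_cog < stiffness.
    """
    theta = commanded
    for _ in range(iterations):
        theta = commanded + math.asin(cog_amplitude * math.sin(n_cog * theta) / stiffness)
    return theta


# With a perfect sensor the table pairs the reading settle(c) with the command c.
# Used backwards, it reports c when the rotor is really at settle(c), so the
# "correction" it applies is exactly the cogging deflection.
grid = [i * 2 * math.pi / 3600 for i in range(3600)]
baked_in = [settle(c) - c for c in grid]
worst = max(abs(e) for e in baked_in)
```

With these illustrative numbers, `worst` comes out at about 0.02 rad (asin(A/K) with A = 0.002 and K = 0.1). A perfectly good sensor would be “corrected” by up to that much, purely from cogging.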

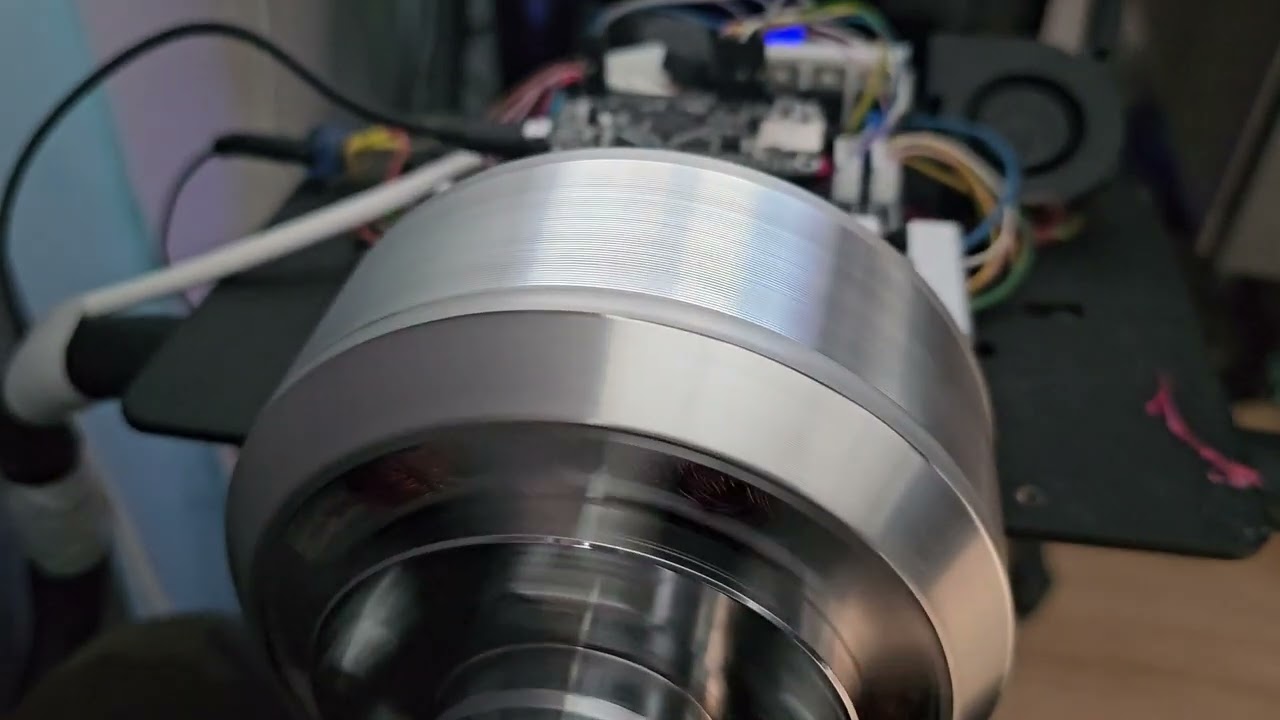

I think a better approach to anti-cogging is to carefully align the sensor and magnet, rotate the motor at significant speed (ideally with a circular disk attached to increase angular momentum), and adjust the voltage waveform until the sensor reads the motion as being as smooth as possible, measured by something like the area under the curve of speed deviation. Different speed regimes may need different waveforms. What you are compensating for is acceleration ripple, i.e. torque ripple, assuming the rotor’s mass and moment of inertia are the same at all times.
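A toy version of that tuning loop: simulate a spinning rotor with an assumed single-harmonic cogging torque, add a feed-forward term `-comp_amplitude * sin(n_cog * theta)`, and pick the amplitude that minimises a smoothness score. The score here is the RMS deviation of finite-difference speed from its mean, one possible reading of “area under the curve”. All names and numbers are hypothetical. A real motor would need phase and several harmonics tuned, not one amplitude. This model also applies the compensation directly as torque, so it ignores back-EMF and inductance, which are plausible reasons why different speeds would need different voltage waveforms.

```python
import math


def ripple_score(angles, times):
    """RMS deviation of finite-difference speed from its mean (lower is smoother)."""
    speeds = [(a1 - a0) / (t1 - t0)
              for a0, a1, t0, t1 in zip(angles, angles[1:], times, times[1:])]
    mean = sum(speeds) / len(speeds)
    return math.sqrt(sum((s - mean) ** 2 for s in speeds) / len(speeds))


def spin(comp_amplitude, cog_amplitude=0.002, n_cog=12, inertia=1e-5,
         drive=0.01, friction=1e-4, dt=2e-5, steps=20_000, sample_every=10):
    """Explicit-Euler spin of a rotor with hypothetical cogging and compensation."""
    theta = 0.0
    omega = drive / friction  # start at the steady-state speed (about 100 rad/s)
    angles, times = [], []
    for i in range(steps):
        # Feed-forward: subtract our guess of the cogging torque from the drive.
        tau = drive - comp_amplitude * math.sin(n_cog * theta)
        cog = cog_amplitude * math.sin(n_cog * theta)
        omega += (tau + cog - friction * omega) / inertia * dt
        theta += omega * dt
        if i % sample_every == 0:  # what a sensor sampling every 0.2 ms would see
            angles.append(theta)
            times.append((i + 1) * dt)
    return ripple_score(angles, times)


candidates = [k * 0.0004 for k in range(11)]  # 0 to 0.004 N*m
best = min(candidates, key=spin)
```

In this model the smoothest run is the one whose compensation amplitude matches the simulated cogging amplitude (0.002). On hardware you would replace `spin` with a real measurement run and probably use a smarter search than a grid.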

Then you would calibrate the sensor by rotating it a ton of times at considerable speed, carefully timing the intervals between reads, and assuming the rotational speed was smooth and constant, in order to correct for sensor eccentricity and magnet misalignment. Take lots of samples and average them to reduce noise. You only have to do this once for each sensor/motor assembly.
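A minimal sketch of that constant-speed calibration, under my own assumptions. Readings are raw angles in [0, 2π) with timestamps. Sampling is fast enough that consecutive readings differ by less than half a turn. The “true” motion is fitted as a straight line `w*t + phi` by least squares. Errors are averaged in 64 bins of raw reading and applied as a nearest-bin correction. The function names and bin count are hypothetical. Any cogging speed ripple still present during the run would be absorbed into the table as if it were sensor error.

```python
import math

TWO_PI = 2.0 * math.pi


def unwrap(angles):
    """Remove 2*pi jumps so consecutive samples differ by less than pi."""
    out, offset = [angles[0]], 0.0
    for prev, cur in zip(angles, angles[1:]):
        step = cur - prev
        if step < -math.pi:
            offset += TWO_PI
        elif step > math.pi:
            offset -= TWO_PI
        out.append(cur + offset)
    return out


def eccentricity_table(times, raw, bins=64):
    """Average sensor error per raw-angle bin, assuming constant true speed."""
    y = unwrap(raw)
    n = len(times)
    mt, my = sum(times) / n, sum(y) / n
    # Least-squares fit of the unwrapped angle to w*t + phi.
    w = (sum((t - mt) * (v - my) for t, v in zip(times, y))
         / sum((t - mt) ** 2 for t in times))
    phi = my - w * mt
    sums, counts = [0.0] * bins, [0] * bins
    for t, r in zip(times, raw):
        err = (r - (w * t + phi) + math.pi) % TWO_PI - math.pi  # wrapped error
        b = int((r % TWO_PI) / TWO_PI * bins) % bins
        sums[b] += err
        counts[b] += 1
    return [s / c if c else 0.0 for s, c in zip(sums, counts)]


def correct(reading, table):
    """Subtract the averaged error of the bin the reading falls into."""
    bins = len(table)
    b = int((reading % TWO_PI) / TWO_PI * bins) % bins
    return reading - table[b]
```

More samples per bin average out noise, and more bins (or interpolating between bins) reduce the residual from the error changing within a bin.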

I don’t know how much you really need to do this; if the sensor and magnet are reasonably well built, I think it’s probably going to be pretty good. I have read that using a larger diametrically magnetized magnet helps too. I honestly don’t know why these sensors are so touchy. A compass works pretty well, and it seems to me these sensors should compare favorably, but instead they need the magnetic field to be neither too strong nor too weak, etc.